Background:

My cluster runs a single-node etcd deployed by kubeadm. I need to scale it out to 3 nodes for high availability.

Steps:

1. Download and configure etcdctl

wget https://github.com/etcd-io/etcd/releases/download/v3.5.2/etcd-v3.5.2-linux-amd64.tar.gz

tar xvf etcd-v3.5.2-linux-amd64.tar.gz

cd etcd-v3.5.2-linux-amd64

cp etcdctl /usr/sbin

My etcd version is 3.5. For versions below 3.4, you need to set:

export ETCDCTL_API=3

Set an alias:

echo "alias etcdctl='etcdctl --endpoints=https://[127.0.0.1]:2379 --cacert=/etc/kubernetes/pki/etcd/ca.crt --cert=/etc/kubernetes/pki/etcd/healthcheck-client.crt --key=/etc/kubernetes/pki/etcd/healthcheck-client.key'" >> /root/.bashrc

source /root/.bashrc
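Aliases are only expanded in interactive shells, so scripts need another way to pass the same settings. A minimal sketch, assuming the kubeadm certificate paths used in the alias above: etcdctl also reads its flags from `ETCDCTL_`-prefixed environment variables. The endpoint here is written without the square brackets, which is an assumption that the plain IPv4 form works equally well.

```shell
# Same connection settings as the alias, expressed as environment variables
# so that non-interactive scripts pick them up too.
export ETCDCTL_ENDPOINTS="https://127.0.0.1:2379"
export ETCDCTL_CACERT="/etc/kubernetes/pki/etcd/ca.crt"
export ETCDCTL_CERT="/etc/kubernetes/pki/etcd/healthcheck-client.crt"
export ETCDCTL_KEY="/etc/kubernetes/pki/etcd/healthcheck-client.key"

# Only call etcdctl when it is installed; `command` skips the alias so the
# flags are not supplied twice.
if command -v etcdctl >/dev/null 2>&1; then
    command etcdctl member list || echo "etcdctl could not reach ${ETCDCTL_ENDPOINTS}" >&2
fi
```

It is probably safest to use either the alias or the variables, not both at once, so there is a single source for the connection settings.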

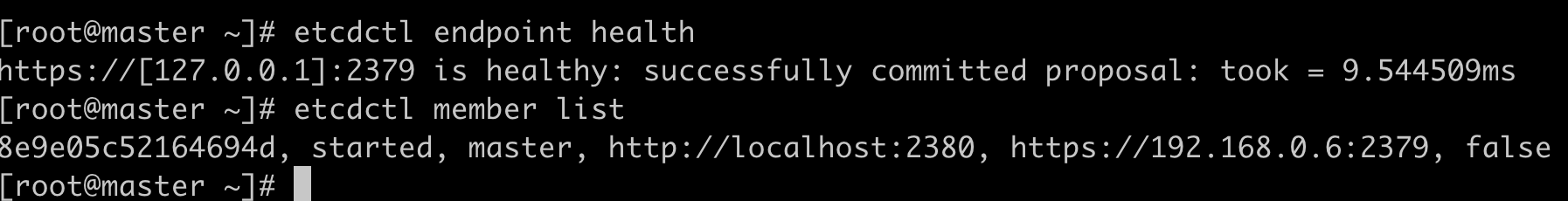

Check the cluster members:

etcdctl member list

Check the endpoint status:

etcdctl endpoint status

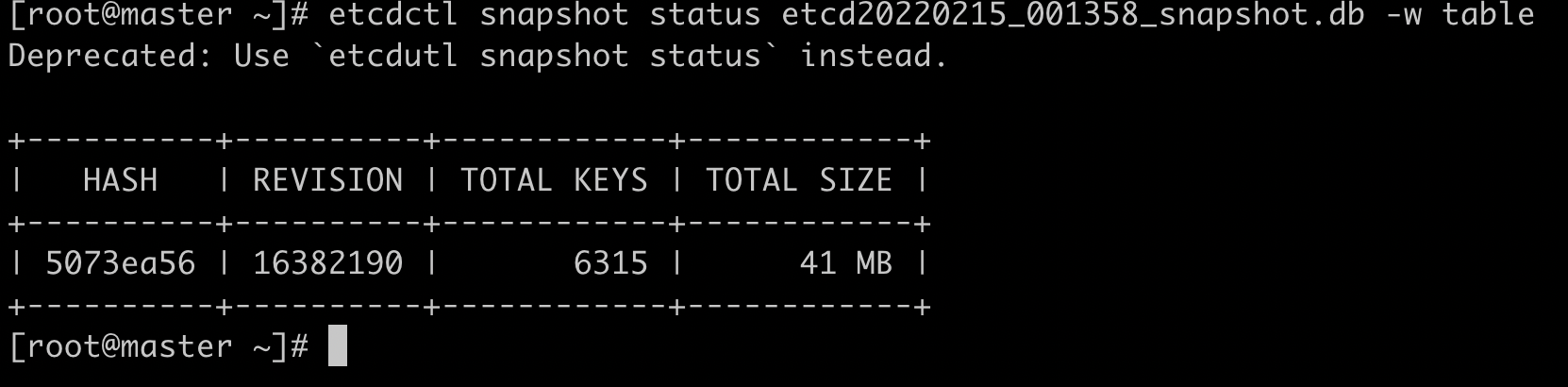

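2. Back up etcd

During the expansion the original etcd data has to be deleted, which loses the master node information of the Kubernetes cluster.

So before expanding, back up with the etcdctl snapshot command, or set up a separate etcd node and transfer the existing data to it. Here I use a snapshot backup.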

etcdctl snapshot save /root/etcd$(date +%Y%m%d_%H%M%S)_snapshot.db

ll etcd20220215_001358_snapshot.db
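Before deleting anything it is worth confirming that the backup file is really there and not empty. A minimal sketch, assuming the snapshots live in /root and follow the `etcd<timestamp>_snapshot.db` naming from the command above; `latest_snapshot` and `snapshot_ok` are hypothetical helper names, not etcdctl features.

```shell
# latest_snapshot DIR: print the newest etcd*_snapshot.db in DIR.
# The timestamp in the file name sorts lexicographically and the glob is
# expanded in sorted order, so the last match is the newest.
latest_snapshot() {
    newest=""
    for f in "$1"/etcd*_snapshot.db; do
        if [ -e "$f" ]; then
            newest="$f"
        fi
    done
    if [ -n "$newest" ]; then
        printf '%s\n' "$newest"
    fi
}

# snapshot_ok FILE: succeed only if FILE exists and is not empty.
snapshot_ok() {
    [ -s "$1" ]
}

snap="$(latest_snapshot /root 2>/dev/null)"
if snapshot_ok "$snap"; then
    echo "backup looks usable: $snap"
else
    echo "no non-empty snapshot found" >&2
fi
```

For a deeper check, `etcdctl snapshot status <file>` reports the size and key count of the snapshot.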

To restore the original cluster, use the following command:

etcdctl --data-dir=/var/lib/etcd \
--initial-advertise-peer-urls=https://192.168.0.6:2380 \
--initial-cluster=master=https://192.168.0.6:2380 \
--name=master \
snapshot restore etcd20220215_001358_snapshot.db

3. Generate and copy certificates

Install cfssl:

curl -s -L -o /usr/bin/cfssl https://pkg.cfssl.org/R1.2/cfssl_linux-amd64

curl -s -L -o /usr/bin/cfssljson https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64

chmod +x /usr/bin/cfssl /usr/bin/cfssljson

Use the following JSON files to generate the certificates.

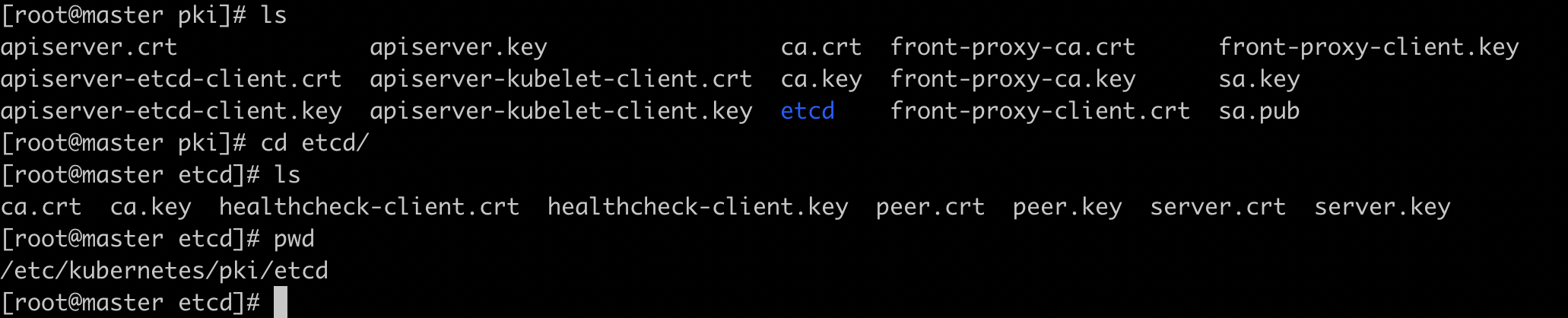

cd /etc/kubernetes/pki/etcd

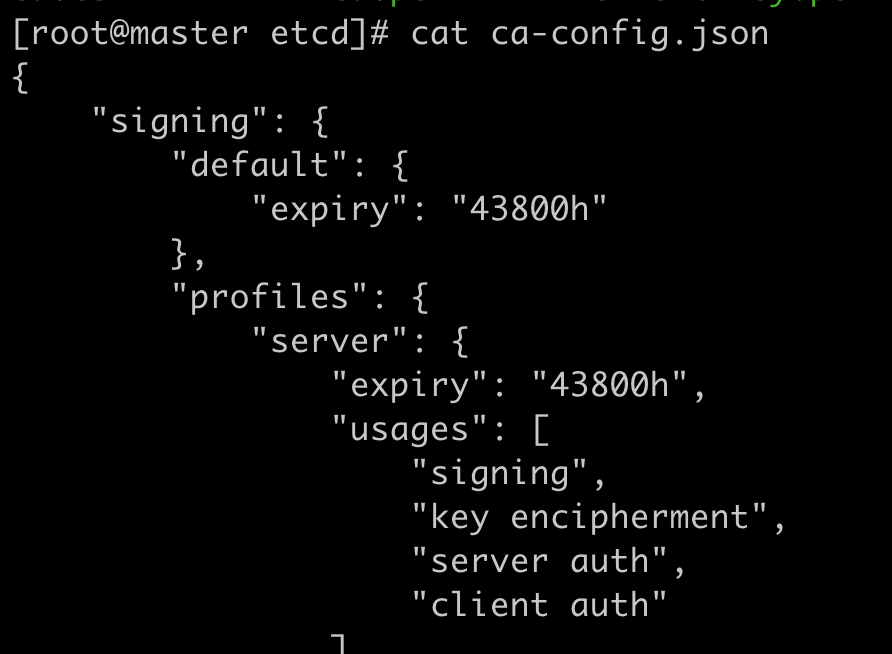

cat ca-config.json

{

"signing": {

"default": {

"expiry": "43800h"

},

"profiles": {

"server": {

"expiry": "43800h",

"usages": [

"signing",

"key encipherment",

"server auth"

]

},

"client": {

"expiry": "43800h",

"usages": [

"signing",

"key encipherment",

"client auth"

]

},

"peer": {

"expiry": "43800h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

cat ca-csr.json

{

"CN": "My own CA",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "US",

"L": "CA",

"O": "My Company Name",

"ST": "San Francisco",

"OU": "Org Unit 1",

"OU": "Org Unit 2"

}

]

}

cat server.json

{

"CN": "etcd0",

"hosts": [

"127.0.0.1",

"192.168.0.6",

"192.168.1.151",

"192.168.1.25"

],

"key": {

"algo": "ecdsa",

"size": 256

},

"names": [

{

"C": "US",

"L": "CA",

"ST": "San Francisco"

}

]

}

cat member1.json # use this host's IP

{

"CN": "etcd0",

"hosts": [

"192.168.0.6"

],

"key": {

"algo": "ecdsa",

"size": 256

},

"names": [

{

"C": "US",

"L": "CA",

"ST": "San Francisco"

}

]

}
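Since member1.json differs between hosts only in its hosts entry, it can be generated instead of edited by hand on each node. A minimal sketch that mirrors the fields of member1.json above; `write_member_json` is a hypothetical helper name, and the IP in the example call is one of the child nodes from this post.

```shell
# write_member_json NAME IP: print a peer CSR with the same fields as
# member1.json, using NAME as the CN and IP as the only host entry.
write_member_json() {
    name="$1"
    ip="$2"
    cat <<EOF
{
  "CN": "${name}",
  "hosts": ["${ip}"],
  "key": {"algo": "ecdsa", "size": 256},
  "names": [{"C": "US", "L": "CA", "ST": "San Francisco"}]
}
EOF
}

# Example: the CSR for the host at 192.168.1.151.
write_member_json etcd0 192.168.1.151
```

Redirecting the output to member1.json on each host gives cfssl the file it expects.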

cat client.json

{

"CN": "client",

"hosts": [

""

],

"key": {

"algo": "ecdsa",

"size": 256

},

"names": [

{

"C": "US",

"L": "CA",

"ST": "San Francisco"

}

]

}

Generate the certificates

Read pitfall 1 before generating (-.-!)

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=server server.json | cfssljson -bare server

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=peer member1.json | cfssljson -bare member1

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=client client.json | cfssljson -bare client

Copy the master node's /etc/kubernetes/pki directory to the child nodes.

scp -r /etc/kubernetes/pki 192.168.1.25:/etc/kubernetes/

scp -r /etc/kubernetes/pki 192.168.1.151:/etc/kubernetes/

On each child node, change the IP in member1.json and regenerate the certificate:

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=peer member1.json | cfssljson -bare member1

4. Stop apiserver and etcd

mv /etc/kubernetes/manifests /etc/kubernetes/manifests_bak

5. Add nodes to the etcd cluster

Add node1 to the etcd cluster:

etcdctl member add node1 --peer-urls=https://192.168.1.151:2380

Add node2 to the etcd cluster:

etcdctl member add node2 --peer-urls=https://192.168.1.25:2380

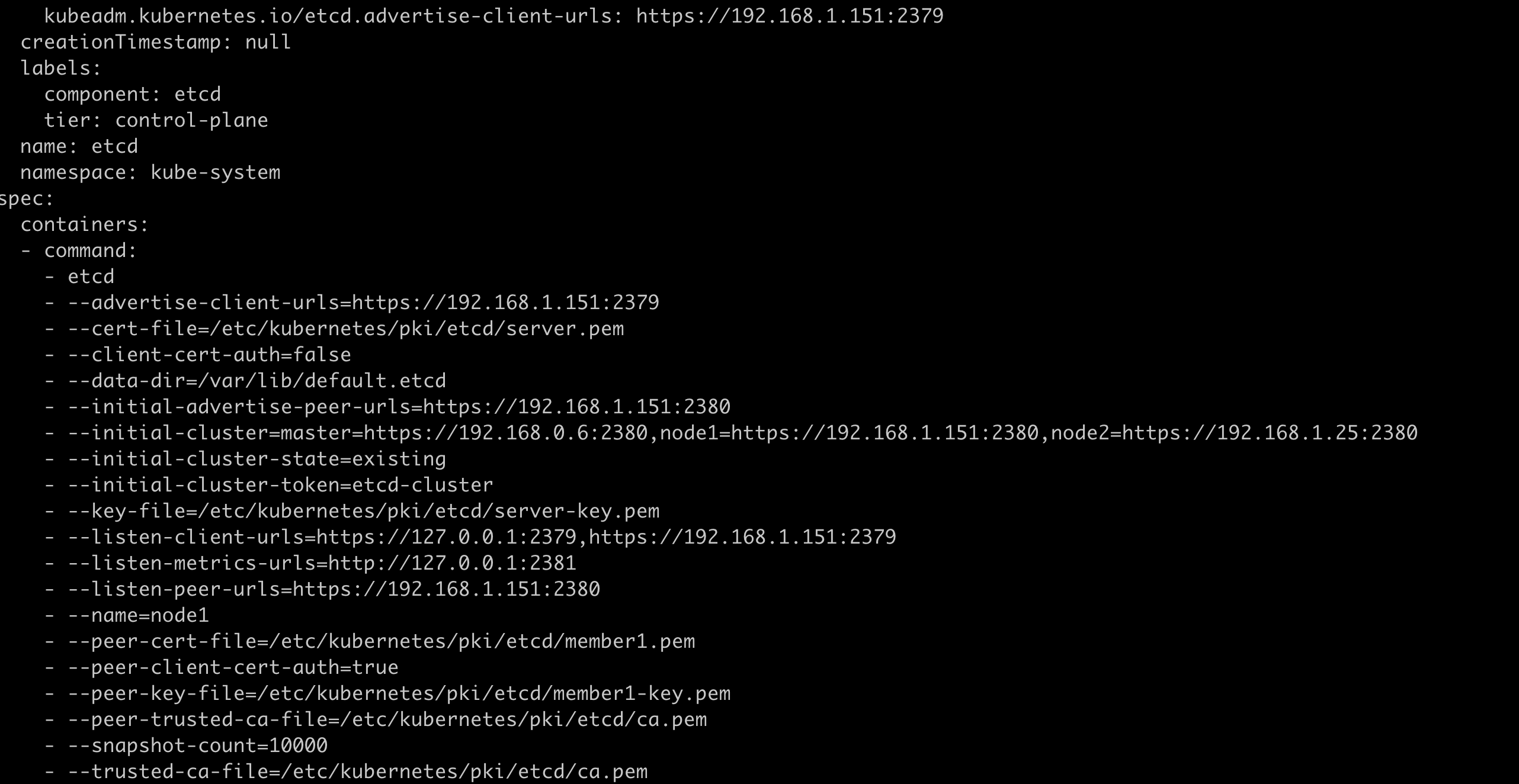

6. Copy and edit etcd.yaml

Place etcd.yaml in /etc/kubernetes/manifests on each child node. The kubelet starts it automatically, because every *.yaml file under /etc/kubernetes/manifests is run as a static pod.

scp /etc/kubernetes/manifests/etcd.yaml 192.168.1.151:/etc/kubernetes/manifests/

scp /etc/kubernetes/manifests/etcd.yaml 192.168.1.25:/etc/kubernetes/manifests/

On node1 and node2:

vim /etc/kubernetes/manifests/etcd.yaml

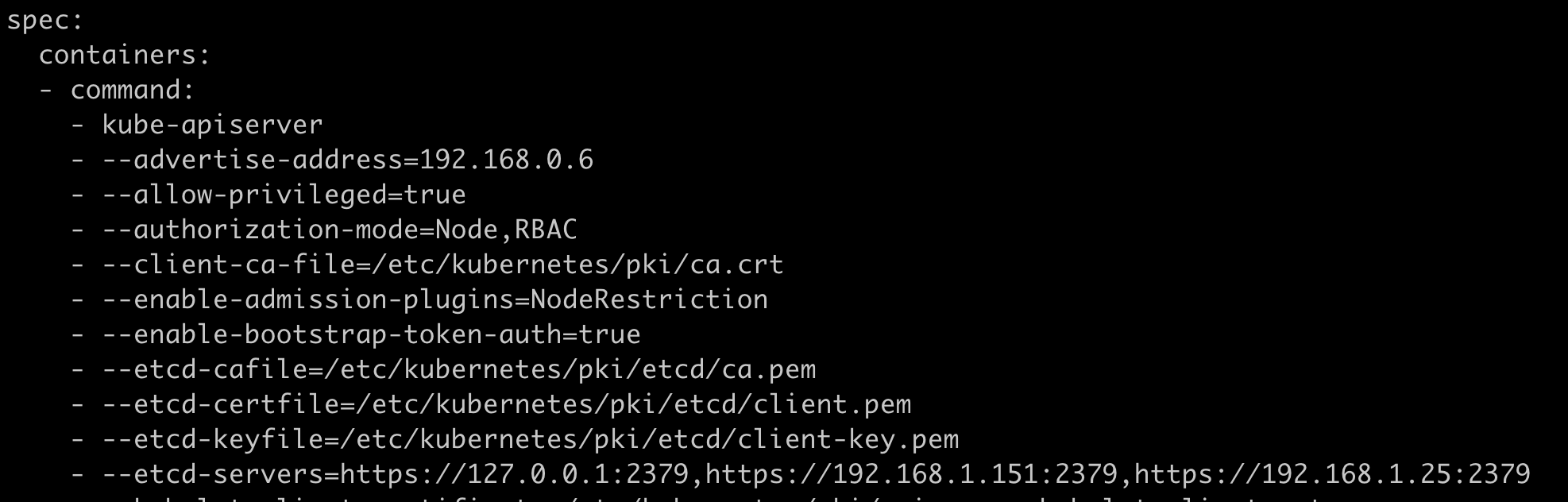
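The edit itself is only shown in the screenshot, so the following is a sketch of what a joining member typically needs rather than a copy of it. It assumes the member names master, node1 and node2 and the peer port 2380 used earlier in this post; `initial_cluster` is a hypothetical helper. The client URL flags in etcd.yaml would presumably also need the node's own IP.

```shell
# initial_cluster NAME=IP...: join members into the comma-separated
# NAME=https://IP:2380 list that etcd expects for --initial-cluster.
initial_cluster() {
    out=""
    for pair in "$@"; do
        name="${pair%%=*}"
        ip="${pair#*=}"
        out="${out:+$out,}${name}=https://${ip}:2380"
    done
    printf '%s\n' "$out"
}

cluster="$(initial_cluster master=192.168.0.6 node1=192.168.1.151 node2=192.168.1.25)"

# Flags node1 would carry in its copy of etcd.yaml (assumed, see above).
printf '%s\n' "--name=node1"
printf '%s\n' "--initial-advertise-peer-urls=https://192.168.1.151:2380"
printf '%s\n' "--listen-peer-urls=https://192.168.1.151:2380"
printf '%s\n' "--initial-cluster=${cluster}"
printf '%s\n' "--initial-cluster-state=existing"
```

Setting the cluster state to existing is what tells the new member to join the running cluster instead of bootstrapping its own, which is the situation described in pitfall 3.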

7. Edit kube-apiserver.yaml

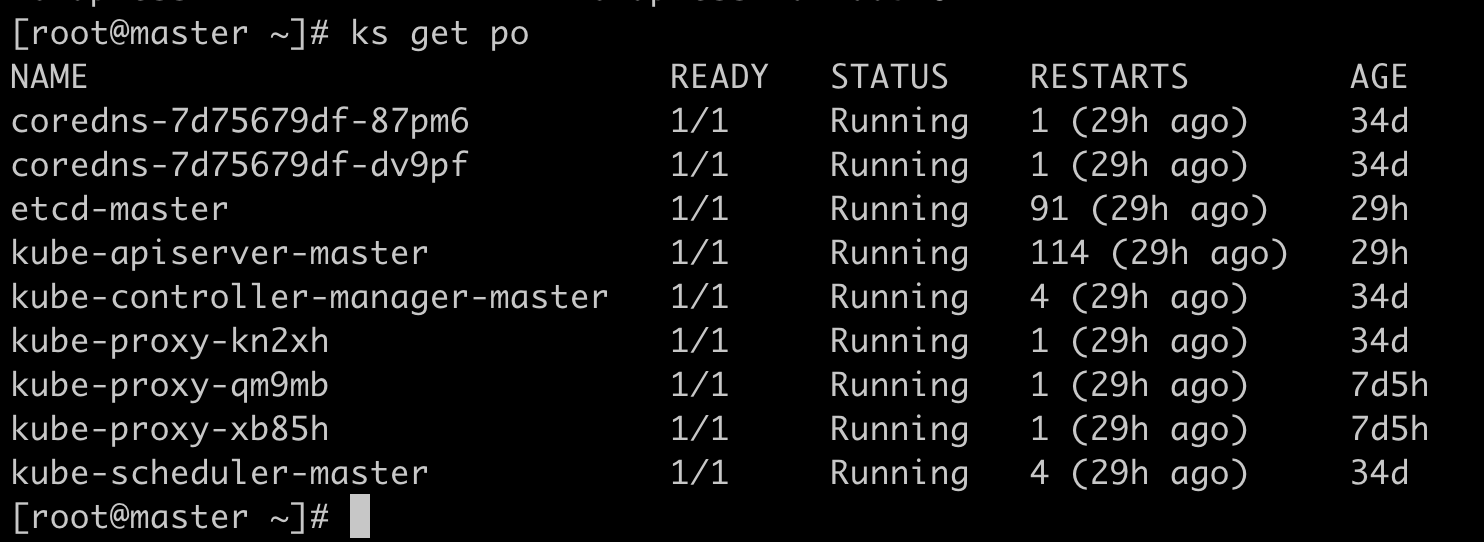
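The screenshot carries the actual change. A plausible version of it, assuming the goal is to point kube-apiserver at all three members through its `--etcd-servers` flag on client port 2379, can be sketched as follows; `etcd_servers` and `with_etcd_servers` are hypothetical helper names.

```shell
# etcd_servers IP...: comma-separated https client URLs on port 2379.
etcd_servers() {
    out=""
    for ip in "$@"; do
        out="${out:+$out,}https://${ip}:2379"
    done
    printf '%s\n' "$out"
}

# with_etcd_servers FILE IP...: print FILE with its --etcd-servers value
# replaced. It writes to stdout instead of editing in place, so the result
# can be reviewed before it is copied over the manifest.
with_etcd_servers() {
    file="$1"
    shift
    sed "s|--etcd-servers=.*|--etcd-servers=$(etcd_servers "$@")|" "$file"
}

etcd_servers 192.168.0.6 192.168.1.151 192.168.1.25
```

For example, `with_etcd_servers /etc/kubernetes/manifests_bak/kube-apiserver.yaml 192.168.0.6 192.168.1.151 192.168.1.25` prints the edited manifest while the manifests directory is still moved aside.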

8. Start apiserver and etcd

mv /etc/kubernetes/manifests_bak /etc/kubernetes/manifests

9. Check the etcd cluster status

etcdctl member list

etcdctl endpoint health

Pitfalls

1. etcd log error: certificate specifies an incompatible key usage

WARNING: 2022/02/15 13:20:30 grpc: addrConn.createTransport failed to connect to {0.0.0.0:2379 <nil> 0 <nil>}. Err: connection error: desc = "transport: authentication handshake failed: remote error: tls: bad certificate". Reconnecting...

{"level":"warn","ts":"2022/02/15T13:20:30.516+0800","caller":"embed/config_logging.go:198","msg":"rejected connection","remote-addr":"127.0.0.1:47329","server-name":"","error":"tls: failed to verify client certificate: x509: certificate specifies an incompatible key usage"}

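Cause:

The usages list of the server profile in ca-config.json is missing client auth.

The certificate is used for client authentication as well as server authentication, so it needs both usages.

Fix:

Edit ca-config.json and add client auth to the server profile.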
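After regenerating, the usages can be checked directly on the certificate before restarting etcd. A minimal sketch, assuming openssl is installed and that the files are named server.pem and member1.pem as produced by the cfssljson commands above; `cert_has_client_auth` is a hypothetical helper name.

```shell
# cert_has_client_auth FILE: succeed if the certificate lists client
# authentication among its extended key usages.
cert_has_client_auth() {
    openssl x509 -in "$1" -noout -text 2>/dev/null |
        grep -q "TLS Web Client Authentication"
}

# Report on whichever of the generated certificates exist in this directory.
for cert in server.pem member1.pem; do
    if [ -f "$cert" ]; then
        if cert_has_client_auth "$cert"; then
            echo "$cert: client auth present"
        else
            echo "$cert: client auth MISSING"
        fi
    fi
done
```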

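2. cluster ID mismatch

Cause:

etcd reports this error because the data-dir directory was not emptied and still holds stale data.

Fix:

Stop and delete the etcd container, empty or delete the directory, then recreate the etcd pod.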
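A slightly more cautious variant of the cleanup moves the stale directory aside instead of deleting it. This is a sketch under the assumption that the data directory is /var/lib/etcd, as in the restore command earlier; `set_aside_data_dir` is a hypothetical helper name.

```shell
# set_aside_data_dir DIR: move a stale data directory out of the way and
# recreate it empty with mode 700. Moving instead of deleting keeps a
# fallback copy until the new member is healthy.
set_aside_data_dir() {
    dir="$1"
    if [ -d "$dir" ]; then
        mv "$dir" "${dir}.bak.$(date +%Y%m%d_%H%M%S)"
    fi
    mkdir -p "$dir"
    chmod 700 "$dir"
}

# Usage on the joining node, once its etcd container has stopped:
#   set_aside_data_dir /var/lib/etcd
```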

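3. member 2ce221743acad866 has already been bootstrapped

Cause:

This node has already been added to the cluster. If --initial-cluster-state is not set in the etcd configuration, it defaults to new.

Fix:

Edit etcd.yaml, add the following flag, delete the data directory, and recreate the pod.

--initial-cluster-state=existing

4. x509: certificate signed by unknown authority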

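Cause:

The exact cause is unknown. The error says the certificate is not trusted, even though I had added all the certificate files to /etc/ssl/certs/ca-bundle.trust.crt.

Fix: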

https://github.com/etcd-io/etcd/blob/e205d09895e6e9d810a88923a64104474002c0c4/Documentation/op-guide/security.md#example-1-client-to-server-transport-security-with-https

Adding the --peer-auto-tls flag to the etcd startup file and recreating the pod fixed it, which is absurd.

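Rollback

After the expansion succeeded, restoring the snapshot reported no error, but the cluster did not recover. snapshot status showed only 2.4m, while the backup was 41m. I don't know what went wrong, and several more restore attempts also failed... My guess is that the expansion went wrong and created a new cluster, even though I had added the existing parameter... So I had to roll back and redo the expansion.

On each node, create a backup directory, stop apiserver and etcd, roll back etcd.yaml and apiserver.yaml, and empty the etcd data directory. Update the etcdctl environment variables.

If etcdctl snapshot restore is run without a data directory, it creates a default.etcd directory in the current directory. Move default.etcd/member into the data directory. Note that the data directory permissions must be 700, otherwise etcd reports an error.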
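The move and the permission fix can be wrapped in one step so neither is forgotten. A minimal sketch, assuming the restore was run in the current directory and the data directory is /var/lib/etcd; `install_restored` is a hypothetical helper name.

```shell
# install_restored RESTORE_DIR DATA_DIR: move RESTORE_DIR/member into
# DATA_DIR and set DATA_DIR to mode 700. Refuses to overwrite existing data.
install_restored() {
    src="$1"
    dst="$2"
    if [ ! -d "$src/member" ]; then
        echo "no member directory under $src" >&2
        return 1
    fi
    if [ -e "$dst/member" ]; then
        echo "$dst/member already exists; empty the data directory first" >&2
        return 1
    fi
    mkdir -p "$dst"
    mv "$src/member" "$dst/member"
    chmod 700 "$dst"
}

# Usage after `etcdctl snapshot restore <file>` in the current directory:
#   install_restored ./default.etcd /var/lib/etcd
```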

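Restart apiserver and etcd.

The restore succeeded.

After the rollback, if the host has multiple NICs, calico does not reach its ready state.

See this article: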

https://wghdr.top/archives/97